Microsoft announced on Thursday that it’s launching Copilot Health, a “separate, secure space” in Copilot for asking questions about lab results and medical records, searching for providers, analyzing data from wearables, and other health-related chats. The feature will have a phased rollout, so it won’t be available to everyone immediately, but users can join a waitlist to get access.

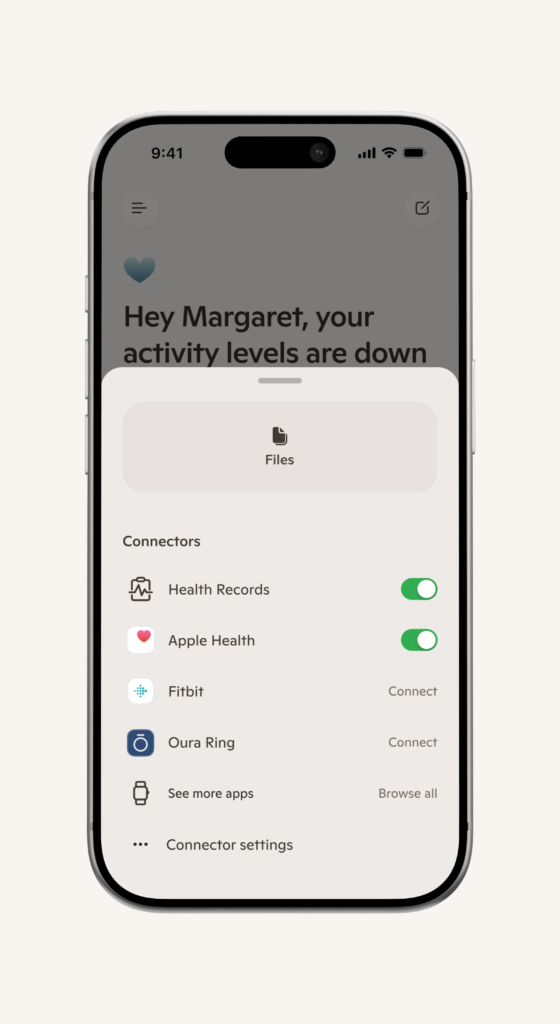

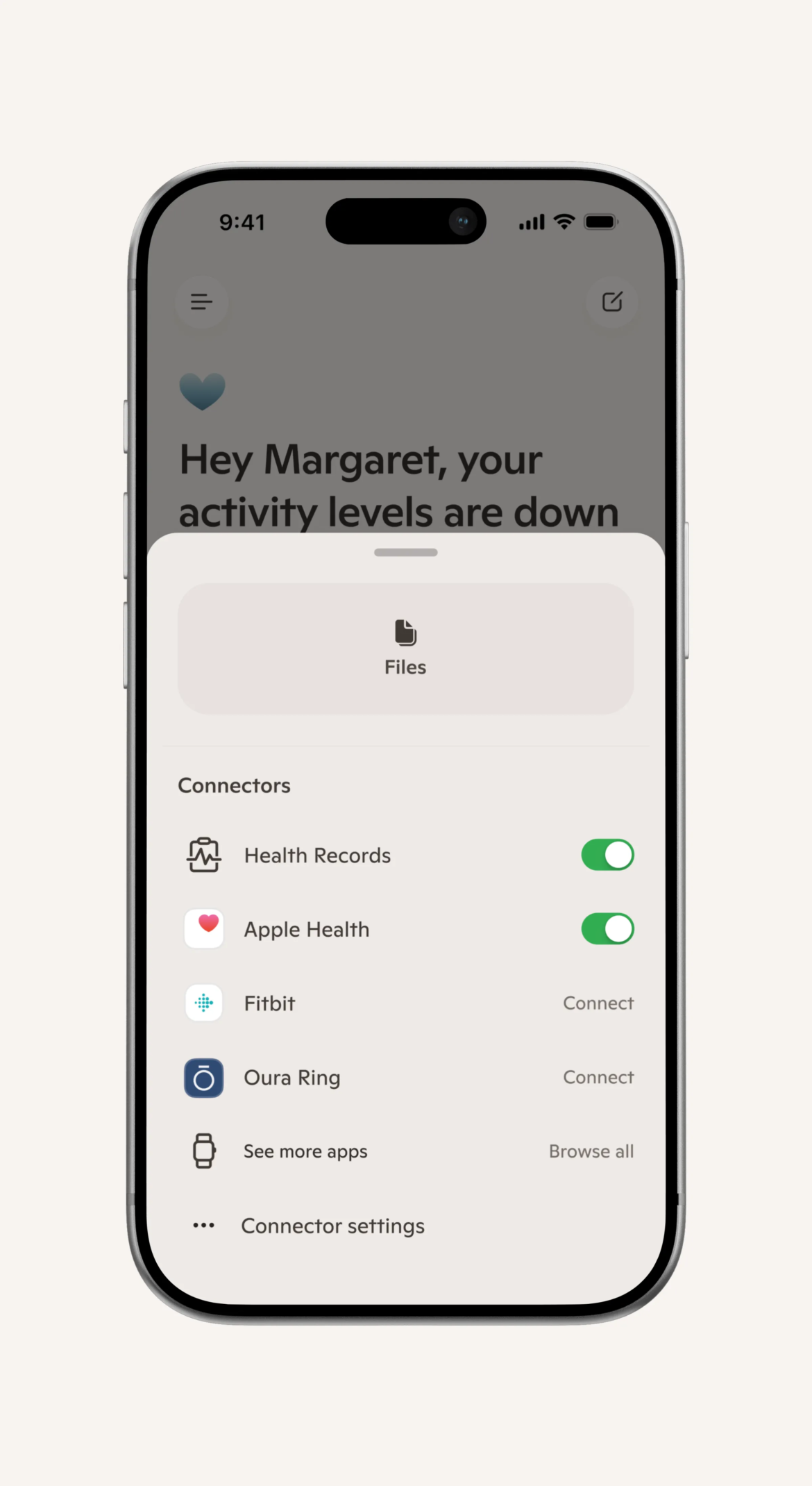

Microsoft says Copilot Health “doesn’t replace your doctor” and isn’t intended for providing medical diagnoses or treatment, but rather helping users understand their health data. Users can import medical records from over 50,000 US hospitals and healthcare organizations through HealthEx, and import lab test results through Function. Copilot Health is also compatible with “over 50 wearable devices,” including those from Apple, Oura, and Fitbit. The Copilot Health homepage can show data from wearables, like current step count, as well as reminders for upcoming appointments, depending on what data users choose to share.


Users can also find medical professionals through Copilot Health. It’s connected to “real-time US provider directories” that can help users search for providers based on specialty, location, languages spoken, and insurance plans accepted.

In its press release on Copilot Health, Microsoft states that it has “improved the quality and reliability of answers by elevating information from credible health organizations across 50 countries.” It also says responses in Copilot Health will include citations with links to sources and “expert‑written answer cards from Harvard Health.”

According to Microsoft, users’ chats in Copilot Health “are isolated from general Copilot and kept under additional access, privacy, and safety controls.” It also claims that data from Copilot Health chats isn’t used for training its AI models. Users are also able to delete their health data or disconnect data sources at any time, such as toggling off access to wearable data.

OpenAI launched a very similar feature in January called ChatGPT Health, which also offers an isolated, sandboxed environment for medical chats, encourages users to connect their medical records, and doesn’t use health chats for model training. However, Microsoft does not currently have a HIPAA-compliant version of Copilot Health, unlike ChatGPT for Healthcare and Amazon’s Health AI, which was opened up to more users on Tuesday. Anthropic’s Claude for Healthcare is similarly “HIPAA-ready.”

When asked about HIPAA compliance in a briefing ahead of Thursday’s announcement, Dr. Dominic King, VP of health at Microsoft AI, stated: “HIPAA is not required for a direct-consumer experience like this when you’re using your own data.” The Health Insurance Portability and Accountability Act includes security requirements for protecting patients’ electronic health data and prohibits certain types of its usage and disclosure. Violators of HIPAA can face fines and potentially even a prison sentence. Since companies like Microsoft aren’t legally required to be HIPAA compliant, they’re not subject to the consequences that a hospital or doctor might face for violating a patient’s HIPAA rights. King added, “But, at Copilot, we think it’s incredibly important that we’re meeting all the best standards out there. So, we will be announcing some updates here on our standing in terms of what are called ‘HIPAA controls.’” King did not elaborate on what exactly that entails.

King also noted that Copilot Health has an ISO 42001 certification. ISO 42001 is an independent, international standard for AI systems that’s intended to promote “responsible use of AI” as well as “traceability, transparency, and reliability.” Microsoft 365 Copilot and Microsoft 365 Copilot Chat also have this certification.

However, even with that certification and any future intentions for voluntary HIPAA compliance, users may still want to be cautious about sharing their medical data with an AI. As experts have pointed out, AI companies can change their data privacy policies at any time. AI also has a history of giving users inaccurate or unsafe medical advice, and has an especially concerning track record when it comes to mental health.

- Stevie Bonifield

Source: www.theverge.com